NBA Regular Season Wins Prediction Based on New Metrics in Machine Learning

- Danni Danni

- Sep 26, 2024

- 14 min read

Updated: Oct 24, 2024

Abstract: In recent years, data analysis has revolutionized the way basketball is understood and managed, with the NBA leading this transformation. The development of advanced metrics and machine learning tools has enabled more accurate evaluations and predictions in the sport. Despite the success of current metrics like the Player Efficiency Rating (PER) and Pythagorean Wins (PW), they often fail to provide a complete picture due to their reliance on production data, which can lead to data leakage. This study aims to introduce new efficiency-based metrics that mitigate the risk of data leakage and improve the accuracy of predicting NBA regular season wins. The new metrics adjust traditional statistics by incorporating the team's pace and comparing them to opponents, ensuring a more reliable prediction framework. Using machine learning models such as K-nearest neighbors, random forest, gradient boosting regression, and support vector regression, the study evaluates the predictive accuracy of the new metrics against traditional data. Results indicate that the new metrics significantly enhance prediction accuracy, particularly in random forest and gradient boosting models. This research provides a more robust methodology for predicting team performance, aiding coaches, scouts, and management in their decision-making processes.

Key Words: NBA regular season wins prediction; new metrics; machine learning; sports data analysis

Contents

1. Introduction

1.1 Background

1.2 Research Objectives

2. Methodology

2.1 Data

2.1.1 Data Collection

2.1.2 Data Cleaning

2.2 New Metrics

2.3 Modeling

2.3.1 Results

2.3.2 Stability Test

2.3.3 Model Evaluation

2.3.2 Interpreting

3. Conclusion

4. Appendix

4.1 NBA Terms

4.2 Inc Node Purity in Random Forest

5. References

1 Introduction 1.1 Background

As data analysis has become ever popular and useful across a variety of industries, NBA is no exception. In the past, coaches and audiences had to rely solely on their experience to choose team starters, make tactics, and keep track of players' health conditions. People used to cast doubt on the credibility of predicting complex games through simple data. However, after the publication of Moneyball by Michael Lewis in 2003, crowds shifted their view. The book proved how computers and statistics successfully built a competitive team with a limited budget. In addition, the development of motion tracking technology(for example, sportVU, a system with multiple cameras can accurately capture movements of players and the basketball) allows the NBA to collect tons of motional data on the courts. As a result, data analysis gets to prevail in the basketball world.

Breakthroughs in data analysis changed the way people interpret basketball. The first breakthrough was the invention of advanced data. At the beginning of sports analytics, people could only make rough evaluations of players based on basic data(such as points, rebounds, turnovers, etc). However, data analysts in the NBA invented PER, WS, offensive/defensive efficiency, and other advanced metrics. These metrics are calculated by basic data, but dig way much further. The data reveals players' personal skills, tactical execution, and even intuition. The second breakthrough was that through advanced tools such as AI and machine learning, team management has been able to make predictions on wins or make immediate adjustment of game tactics.

In the NBA, data is drawn to serve various purposes. The three most commonly used fields are developing tactics, managing the team, and evaluating players. First, with the help of analysis tools, coaches can grip strengths and weaknesses of the opponent team thus providing specific adjustments to win the game. The second application is to evaluate players. It could be misleading to merely judge an individual player's performance with one set of eyes. The whole

3

team's performance, the strengths of the opponents, and the condition of the player that game day: It's difficult for a scout to take all these into consideration. The recruiting process has become a laborious and intensive process that has yielded sometimes biased conclusions on potential stars. However, data analysis appears to be the ideal scout nowadays. Lastly, an application of data analysis is the management of the whole team. Through the analysis of historical and real-time data, the team's performance can be predicted in advance. Evaluation of the team is always the final goal of sports analysis. Evaluation of players only serves this aim. Besides, the team management can adjust its ticketing, advertising, and commercializing strategies based on the team's performance.

1.2 Research Objectives

However, current metrics in the NBA don’t always show the full picture. Let's take PER for instance. What is PER?

The Player Efficiency Rating (PER) is an advanced basketball statistic created by John Hollinger. It is designed to assess a player's statistical accomplishments. PER takes into account a player's positive contributions (such as points, rebounds, assists, and steals) and negative actions (like missed shots, turnovers, and personal fouls). In addition, PER is adjusted to the pace of the season and thus can compare players from different eras. It allows for a comprehensive evaluation of a player's overall efficiency on the court.

The metric of PER is very complex. Firstly, uPER(unadjusted PER) is calculated, which converts data in total into data per minute and puts different weight on the data. Secondly, aPER(adjusted PER) is calculated, which is able to compare players in different eras by adjusting the data according to league paces. Thirdly, PER is calculated based on uPER and aPER, playing a role of standardizing.

Of course, you don't have to fully understand the complex formulas, but still, it's essential to know basic terms in the basketball world to continue our discussion, which you can refer to in the appendix.

Let's look at the following example in a specific season:Player A drops 27.5 points, 6.3 rebounds, 3,8 assists, 1.9 steals, 0.8 blocks, and 2.2 turnovers, with 52.9% 2-point goal percentage and 38% 3-point goal percentage per 36 minutes;player B drops 25.2 points, 8.2 rebounds, 8.3 assists, 1.2 steals, 0.6 blocks, and 3.9 turnovers, with 61.1% 2-point goal percentage and 36.3% 3-point goal percentage per 36 minutes.

From all perspectives, you'll conclude that player B is the more versatile player. To have a better understanding of that fact, let's make a radar chart to reinforce the idea.

Our intuition is obviously right. However, PER, an advanced metric of comprehensive evaluation of players, favors player A to player B. In fact, player A is Kawhi Leonard; player B is LeBron James; both data is collected from season 2016-2017. What’s wrong with PER?

Here’s another example in season 2023—2024:Ayo Dosunmu drops 14.9 points, 3.5 rebounds, 4 assists, 1.1 steals, 0.6 blocks, and 1.6 turnovers, with 56.9% 2-point goal percentage and 40% 3-point goal percentage per 36 minutes;Bogdan Bogdanović drops 20.2 points, 4.2 rebounds, 3.7 assists, 1.3 steals, 0.5 blocks, and 1.6 turnovers, with 50% 2-point goal percentage and 37.6% 3-point goal percentage per 36 minutes.

In this season, Ayo has a PER of 13.3, and Bogdan has a PER of 14.8. The difference of their PERs is 1.5. Let have a look at basic stats from radar charts:

We conclude that there is only a slight difference between their stats. And such a difference in basic stats is enough to result in a difference of 1.5 in PERs. However, let’s compare Russel Westbrook’s performance in season 2014-2015 to that in season 2016-2017.

It is clear from the radar chart that Russell has much better performance in season 2016-2017 than season 2014-2015. But surprisingly, PER of Russell in season 2016-2017 is 30.6, 29.1 in season 2014-2015. The difference in Russell’s PERs is exactly 1.5, the same as that of Ayo and Bogdan.

It seems that the progress made by Russell in PER does not match the progress in his stats. What’s the relative disadvantage of Russell? We spot his relative higher turnover rate and lower shot percentage than Ayo and Bogdan. However, that’s mainly because Russell shoots a lot more than the former. Russell made 842 baskets in season 2016-2017, and 647 in season 2014-2015. By contrast, Ayo made 339 baskets and Bogdan made 428 baskets in season 2023-2024.In the basketball world, no player can make a higher shot percentage with more shots.

In conclusion, players who make less mistakes are unreasonably favored by PER. They have higher PERs than players who have more versatile data but make more mistakes. In addition, in a same season, players in a slow-paced team tend to have higher PERs:

We conclude from the formula that when LgPace is constant, the lower the LgPace, the higher the aPER(and thus higher the PER). The shortcomings of PER and other metrics inevitably mislead people’s evaluation of players, leading to a distorted assessment of the entire team based on individual performances. This raises the question: What are the issues with current methods of predicting seasons wins? Is it possible to predict season wins more accurately through a different set of criteria? By exploring various team statistics, this study aims to develop a precise prediction of NBA regular season wins.

2 Methodology

2.1 Data

2.1.1 Data Collection

All data comes from the NBA. The data includes Team_Toals, Opponent_Totals, Team_Summaries, and Per36Mins.

Team_Totals includes 1845 entries and 28 columns. The columns are: season, league, team, abbreviation, playoffs, g, mp, fg, fga, fg%, x3p, x3pa, x3p%, x2p, x2pa, x2p%, ft, fta, ft%, orb, drb, trb, ast, stl, blk, tov, pf, pts.

Opponent_Totals includes 1845 entries and 28 columns. The columns are: season, league, team, abbreviation, playoffs, opp_g, opp_mp, opp_fg, opp_fga, opp_fg%, opp_x3p, opp_x3pa, opp_x3p%, opp_x2p, opp_x2pa, opp_x2p%, opp_ft, opp_fta, opp_ft%, opp_orb, opp_drb, opp_trb, opp_ast, opp_stl, opp_blk,opp_tov, opp_pf, opp_pts.

Team_Summaries includes 1845 entries and 31 total columns. The columns are: season, league, team, abbreviation, playoffs, age(mean age), wins, losses, pw, pl, mov, sos, srs, o_rtg, d_rtg, n_rtg, pace, f_tr, x3p_ar, ts%, e_fg%, tov%, orb%, ft_fga, opp_e_fg%, opp_tov%, opp_drb%, opp_ft_fga, arena, attend, attend_g.

Per36Mins includes 31853 entries and 34 columns. The columns are: season id, season, player id, player, birth year, position, age, experience, league, team, g, gs, mp, fg, fga, fg%, x3p, x3pa, x3p%, x2p, x2pa, x2p%, ft, fta, ft%, orb, drb, trb, ast, stl, blk, tov, pf, pts.

2.1.2 Data Cleaning

First, this study only includes the data from season 1981-1982 to season 2023-2024.The three- point line was introduced from the ABA in season 1980-1981. At that season, teams considered.

the three-point line as a temporary experiment, so players didn’t attempt much three-pointers. But since season 1981-1982, the three point line is set permanently and widely-accepted. Season 2023-2024 only completed about 70 games at the time the report is written, which is harnessed in the stability test.

Second, I remove some columns in the datasets.For the Team_Totals dataset, this study only keeps these columns: fg%, x3p%, x2p%, ft%, orb, drb, ast, stl, blk, tov, pf.For the Opponent_Totals, this study only keeps opp_fg%, opp_x3p%, opp_x2p%, opp_ft%, opp_ orb, opp_drb, opp_ast, opp_stl, opp_blk, opp_tov, opp_pf.For the Team_Summaries dataset, this study only keeps these columns: w, orb%.

Third, since some NBA teams changed their names through history, I change the names in my datasets into the team names now. That is:

Seattle SuperSonics(SEA) --- Oklahoma City Thunder(OKC) Kansas City Kings(KCK) --- Sacramento Kings(SAC) San Diego Clippers(SDC) --- Los Angeles Clippers(LAC) Washington Bullets(WSB) --- Washington Wizards(WAS) New Jersey Nets(NJN) --- Brooklyn Nets(BRK) Charlotte Bobcats(CHA) --- Charlotte Hornets(CHO)

Fourth, in order to apply our machine learning model, the data is split into training and testing data. The training set and test set are split in chronological order; the newest 25% in terms of time is the test data, while the oldest 75% is the training data.

2.2 New Metrics

The current predictive metric of regular season wins is Pythagorean Wins(PW). The metric estimates team’s expected wins based on its points scored and allowed over a given period. The fundamental premise underlying PW asserts that a team’s winning percentage can be approximated using a formula derived from the Pythagorean theorem. Formally expressed as(Here, k represents an exponent typically around 13.91 for basketball):

The accuracy of PW is extremely high. We can tell it from its MAE(Mean Absolute Error):

MAE of PW = 2.37405

The result indicates that the average prediction error of PW is around 3 games. Since PW is already so accurate, why attempt to predict the number of wins? Let me explain.

The principle of choosing predictors is the nonexistence of data leakage. In machine learning models, data leakage refers to the situation where information from outside the training dataset is used to create the model. This can lead to overly optimistic performance estimates during model training and evaluation. To prevent data leakage, it’s essential to ensure that the training data strictly represents the information that would be available at the time of prediction, maintaining the integrity and reliability of the machine learning model. For example, it’s risky to provide models points data, because the team who wins the most probably scores the most points. The data should be defined into two categories: one indicating production and the other reflecting efficiency. We should not use production data, especially scoring-related data such as three- pointers made (3PM), which directly influences team scoring. For instance, teams with higher 3PM scores more three-pointers, increasing their total score and likelihood of winning games. By contrast, only efficiency data, such as three-point percentage and offensive rebounding rate, should be used as predictors. This approach helps mitigate the risk of data leakage. So, through the PW formula, it can be seen that PW calculations are directly based on total points scored by the team and total points allowed, which fall under production data. This poses significant risk of data leakage.

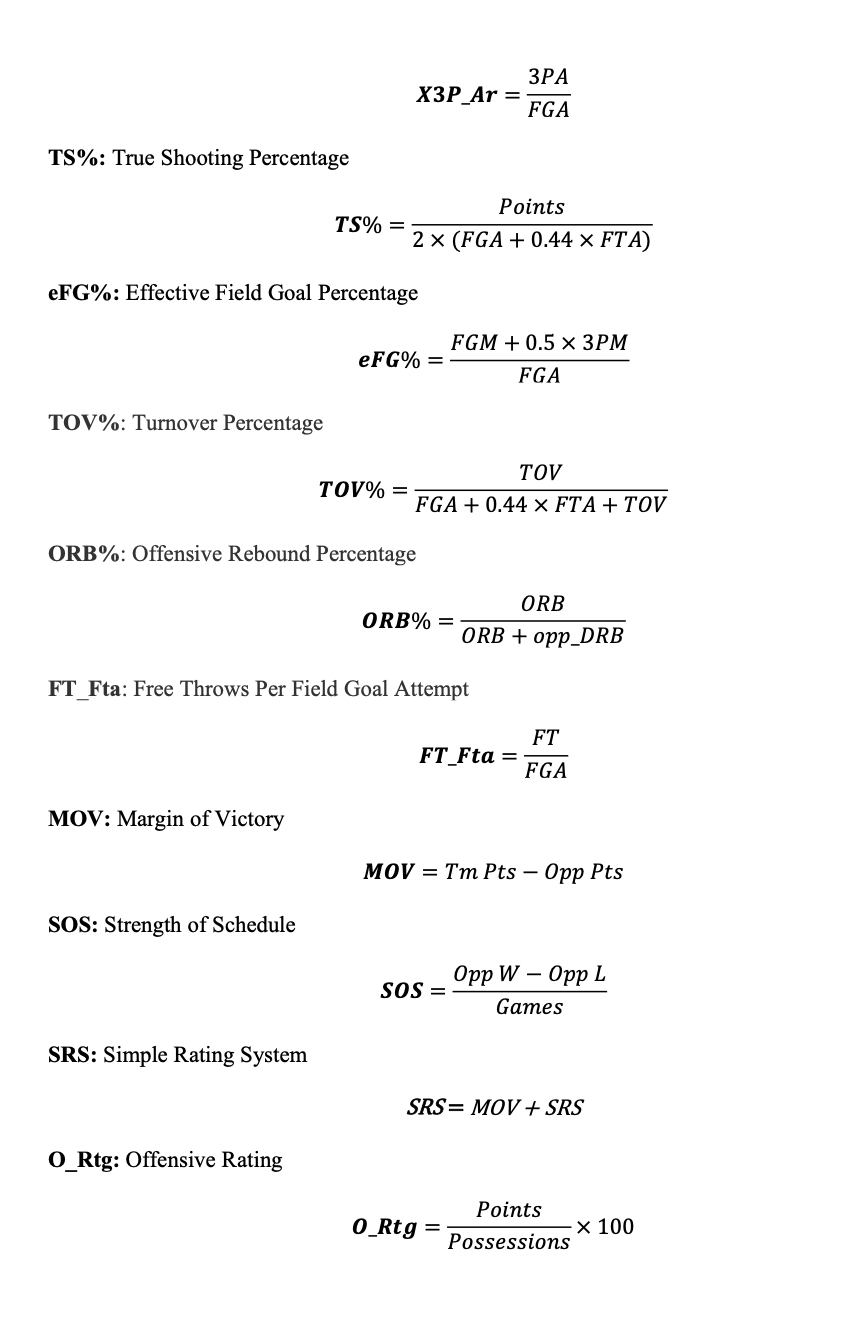

Using the same logic, we must also discard MOV, SOS, SRS, O_Rtg, D_Rtg, N_Rtg, TS%, and eFG%. These metrics directly utilize scoring production data such as Tm PTS, and some even directly use win-loss records, which we can tell from their formulas:

This study aims to invent new metrics to capture the essence of the game more accurately. We will compare the accuracy of machine learning models trained using these newly developed metrics against those trained with basic, traditional data to determine whether there is a significant improvement. The fundamental idea underpinning this research is that in basketball, as in any other sport, the higher a team's production, the more challenging it becomes to maintain efficiency. One of the core metrics we consider is pace, which represents the number of offensive possessions a team has per 48 minutes. Pace serves as an efficiency metric that reflects a team’s offensive tempo, in which we don’t risk data leakage but take the team’s production level into consideration. Typically, a team's pace value fluctuates around 100, indicating the average number of possessions per game. To normalize this metric, we use 0.01 times the pace as an adjuster, resulting in a value close to 1. We hope this adjustment allows for more precise prediction on team winning. The adjusted metrics are as follows:

2.3 Modeling

In this study, we will employ K-nearest neighbors regression, random forest, gradient boosting regression, and support vector regression for predicting the number of wins. Subsequently, we will evaluate the prediction accuracy and model stability to select the final model for win prediction.

2.3.1 Results

We first train the model using a training set constructed with new metrics and obtain its MAE(We are using MAE here because the highest regular season win in NBA history is 73, and it’s not an outlier, so there is no need to use MSE). Then, we train the model using a training set constructed with traditional data and obtain the fitting accuracy. Finally, we take the natural logarithm of the ratio of the accuracy from the two training sessions to evaluate the model’s improvement.

The features(columns except wins) in traditional train data are: X3P%, X2P%, FT%, BLK, PF, opp_X3P%, opp_X2P%, opp_FT%, opp_BLK, opp_PF, TOV%, STL%, ORB%, DRB%, AST%, opp_AST%.

The features(columns except wins) in new-metrics train data are: aORB%, aDRB%, aTOV%, Pace, aSTL%, aAST%, aFG%, aTOV%_ratio, Pace_ratio, aSTL%_ratio, aAST%_ratio, and aFG%_ratio.

We can plot a grouped bar chart to visualize the difference. Remember, the lower MSE and MAE, the more accurate the model is.

From the plot, we conclude that based on new metrics, random forest and gradient boosting regression significantly reduced the error; Knn regression slightly reduced the error; Surprisingly, the error in support vector regression slightly increased for the new metrics. A possible explanation is that the default value of ε (epsilon) =1 parameter in support vector regression means the model has a relatively narrow ε-insensitive tube. which lead to a situation where the model closely fits each data point in the training set, but struggle to generalize well to unseen data, increasing the risk of overfitting.

2.3.2 Stability Test

In order to give a comprehensive evaluation of the model, we’ll conduct a stability test. The stability test involves determining whether the model has potential overfitting risks by reducing the sample size. We will retrain and test the models using half of the previously used test and training data (which still adhere to the chronological order because prediction should strictly follow time order). We continue to represent the relative change in model accuracy using the natural logarithm of the MAE ratio. Remember in this case, the lower the relative difference of MAE, the more stable the model is.

2.3.3 Model Evaluation

Random forest achieved the highest prediction accuracy. Gradient boosting regression and support vector regression followed closely. After conducting the stability test, gradient boosting regression proved to be the most stable, with a negligible difference from random forest; these two models exhibited nearly identical prediction accuracy(with MAE 5.95 and 6.06) with 0.5 times the sample size. Although support vector regression displayed good accuracy, it’s significantly unstable especially compared to lower than that of random forest and gradient boosting regression. K-nearest neighbors regression showed the poor prediction accuracy and stability, suggesting it may not be suitable for our modeling. In summary, we select the random forest model as the final model for predicting NBA regular season wins.

2.3.4 Interpreting

The inc node purity plot demonstrates the contribution each feature makes to the random forest model’s predictions(The higher the inc node purity, the greater the feature’s contribution).

In overall, we observe that the most notable feature in the chart is that the relative differences in the same metrics between teams and their opponents contribute more to the model than the metrics themselves (except for aTOV%, whose inc node purity is almost equal to its ratio). This is reasonable because victory requires outperforming the opponent. Specifically, the most significant contribution is from aFG%_ratio, indicating that the key to winning lies in the difference in overall shooting efficiency between the two teams (here, “overall” also considers the team’s pace and the proportion of possessions taken by free throws). Next is Pace_ratio, showing that the number of possessions per unit time for both teams affects win prediction, possibly because differences in pace greatly impact the team’s regular tactics and stamina distribution. The third most significant contributor is aAST%, highlighting that smoother team cooperation and offense compared to the opponent significantly contribute to winning in modern basketball. The fourth is aSTL%_ratio, reflecting a team’s strong defensive style. Compared to blocks, steals can directly lead to a transition from defense to offense, clearly disrupting the offensive rhythm and team coordination of the opponent.

Given the significant drop in inc node purity between the fifth and fourth values, the contribution beyond the fourth-ranked value to the model is not high. Therefore, we provide partial explanations for only a few values. Without considering the relative differences between the team and the opponent, aFG_Percent is just a numerical value, but maintaining a reasonably high shooting percentage is more important than controlling turnovers and rebounds for winning a game. ORB% and DRB% have low contribution values, possibly because they are not necessarily related to shooting efficiency and team cooperation or have minimal impact on the opponent’s pace. Given the high contribution value of aSTL%_ratio, a potential explanation for the lower ranks of aTOV% and aTOV_ratio is that the proportion of turnovers not caused by the opponent’s steals is relatively high, leading to a lack of proportionality between steal-related data and turnover-related data. It is also possible that aSTL% reflects strong team defense, while TOV% is neither a defensive efficiency indicator nor necessarily related to low overall shooting efficiency.

3 Conclusion

This research introduces a novel approach to NBA win prediction by developing new efficiency metrics that mitigate data leakage issues inherent in traditional metrics like PER and PW. By incorporating adjustments for team pace and opponent comparisons, our proposed metrics offer a more nuanced evaluation of team performance. The application of these metrics in machine learning models, particularly random forest, has demonstrated significant improvements in predictive accuracy.

The findings highlight the critical role of efficiency-based metrics in enhancing the reliability of NBA win predictions. These metrics provide a comprehensive assessment of team performance, offering valuable insights for coaches, analysts, and management in their strategic decision- making processes. The enhanced predictive accuracy achieved through these metrics underscores their potential to revolutionize performance evaluation in professional basketball.

Future research should explore further refinements of these efficiency metrics and their application across different contexts within basketball analytics. Additionally, investigating the integration of these metrics with other advanced analytical techniques could yield even more robust predictive models, paving the way for more sophisticated approaches to understanding and managing team performance in the NBA.

4 Appendix

4.1.1 NBA Terms

Tm (prefix): Statistics of the TeamLg

(prefix): Statistics of the LeagueOpp

(prefix): Statistics of the Opponent Team

G: Games Played

MP: Minutes Played

Pos: Position

3PM/X3P: 3-Point Field Goals

2PM/X2P: 2-Point Field Goals

AST: Assists

FGM: Field Goal Made

FGA: Field Goal Attempts

FTM: Free Throw Made

FTA: Free Throw Attempts

TOV: Turnovers

DRB: Defensive Rebounds

ORB: Offensive Rebounds

TRB: Total Rebounds

BLK: Blocks

STL: Steals

PF: Personal Fouls

W: Wins

l: Losses

AST%: Assist Percentage

5 References

1.Tomasz,Z., Kazimierz,M., Pawel,C., Marek, K., Michal, K., and Piotr, M.. Long-Term Trends in Shooting Performance in the NBA: An Analysis of Two- and Three-Point Shooting across 40 Consecutive Seasons

2.Ehran, K.. Advanced NBA Stats for Dummies: How to Understand the New Hoops Math

3.Kubatko, J., Oliver, D., Pelton, K., and Rosenbaum, D.T.. A starting point for analyzing basketball statistics. Journal of Quantitative Analysis in Sports

Comments